Did he pinch zoom you? You can tell us, this is a safe space.

This is also true of most smartphone apps; for example, all UI operations are single threaded.

The same logic applies everywhere; unless the program’s author has gone to great lengths to make every action happen in a background thread – which also implies they’ve written the code to wait for all those actions to complete, sync up and merge the results – then single threaded perf will be a very accurate estimate of overall performance.

And it is.

Multi-threaded programming is not just hard to apply to many problems, it’s hard, period.

Algorithms for splitting rendering work between processors, as well as the thread synchronization that goes with the progressive effect rendering, is easily the most complex code in Paint.NET. It’s worth it though because this gives us a huge performance boost when rendering effects.

Rendering graphics effects is one of the cases where the work can be easily split up, that is, you divide the screen into (x) sections and have (x) processors work on each of those sections.
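As a rough illustration of that kind of split, here is a minimal Java sketch. It is not Paint.NET's code; the `BandRenderer` class, the invert "effect", and the horizontal-band layout are all assumptions made up for the example. Each pixel depends only on itself, so the bands never need to talk to each other and the only synchronization is waiting for all of them to finish.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

// Hypothetical sketch: split an image into horizontal bands, one task per band.
class BandRenderer {

    // Stand-in "effect": invert an 8-bit grayscale pixel.
    static int effect(int pixel) {
        return 255 - pixel;
    }

    static int[] renderParallel(int[] pixels, int width, int height, int bands) {
        int[] out = new int[pixels.length];
        ExecutorService pool = Executors.newFixedThreadPool(bands);
        try {
            List<Future<?>> pending = new ArrayList<>();
            for (int b = 0; b < bands; b++) {
                // Rows [startRow, endRow) belong to band b; bands never overlap.
                final int startRow = b * height / bands;
                final int endRow = (b + 1) * height / bands;
                pending.add(pool.submit(() -> {
                    for (int i = startRow * width; i < endRow * width; i++) {
                        out[i] = effect(pixels[i]);
                    }
                }));
            }
            // The "sync up" step: wait for every band before using the result.
            for (Future<?> f : pending) {
                f.get();
            }
        } catch (Exception e) {
            throw new RuntimeException(e);
        } finally {
            pool.shutdown();
        }
        return out;
    }

    public static void main(String[] args) {
        int width = 4, height = 6;
        int[] pixels = new int[width * height];
        for (int i = 0; i < pixels.length; i++) {
            pixels[i] = i;
        }
        int[] out = renderParallel(pixels, width, height,
                Runtime.getRuntime().availableProcessors());
        System.out.println(out[0] + " " + out[pixels.length - 1]);
    }
}
```

The easy part is exactly what makes this case unusual: no band reads another band's output. An effect that needs neighboring pixels (a blur, say) would already have to think about band edges.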

Poked head in to see what was happening, mostly wumpus’s crusade.

In other words, nothing to see here.

Eh, this is really not true. I mean, certainly automatically breaking big tasks up into parallel ones is no trivial undertaking, but I think you are overstating the difficulties of multithreaded programming.

The very nature of all user interface operations taking place on a single event dispatch thread effectively NECESSITATES use of multiple threads, because you never want to do large operations directly on that thread. You always spawn separate threads for that kind of stuff, so that the main interface doesn’t lock up.

You generally always have multiple threads going in most Android (or Java in general) apps, for all but the most trivial of applications.

Well, I’ve been working in software for 30+ years, and I’m here to tell you that you may have contracted … “shit’s easy syndrome”.

Feel free to read up:

Probably the best near term solution is built in data structures and facilities that use multi-threading under the hood. That is good and solid and necessary, but it’s no magic bullet, not by a long shot.

Many if not most problems simply aren’t amenable to multi-threaded solutions – ideally you need the work to be perfectly divided up into sections, where each section has no dependency of any kind on the other sections. Video rendering is like this, as are graphics effects (photoshop filters), a lot of database queries, webservers where each core can service an individual user request, etcetera. The clear wins are almost always on the server versus the client, because the server is doing more work for more people, and that’s easy to break up into completely independent chunks of work.

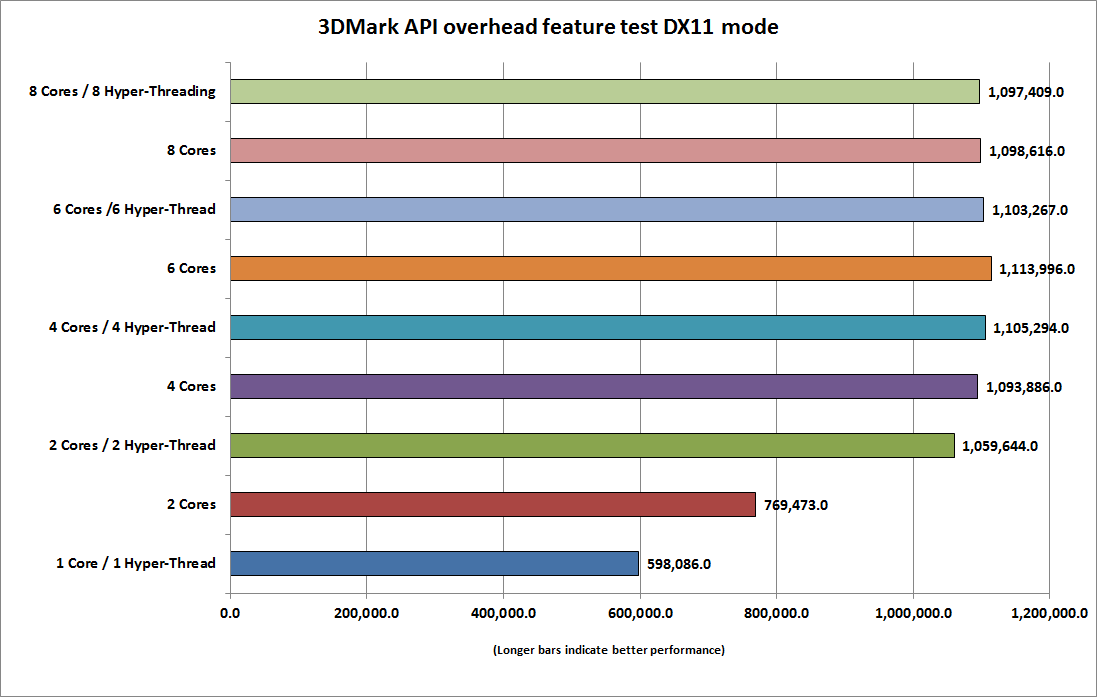

And even when it does scale… it is difficult to scale cleanly to 4, 8, 16, 32:

It is funny to realize that hyperthreading is basically Intel’s way of telling programmers they suck at writing multithreaded code. And they do, because it’s really hard.

I’m just speaking from my own 15 years of experience, where I do multithreaded programming pretty routinely.

I mean, maybe I’m super genius, but I don’t think so.

Yeah, but we aren’t talking about making a parallel solution to a single problem. We aren’t parallelizing an algorithm.

We are writing a software application, where there are generally lots of things going on at the same time.

Like I said man, anyone who has done any significant application development uses threads all the time. Certainly you in your 30 years of experience have done this. You essentially HAVE to do it for applications to not have terrible performance in terms of UI sluggishness.

Nope. Rarely at best. Not unless the primitives you use are doing it for you behind the scenes.

We didn’t do it when building Stack Overflow. And we didn’t do it when building Discourse. The most “threading” we would do is have stuff occur on a background scheduler… say, run the query to award certain badges every 5 minutes, this data cleanup task every day, another data synchronization task every week, etc. There is a lot of that, and I guess it is a sort of ghetto “multi-threading”, just adding tasks to the scheduled task queue. When you get the badge, we add a notification to your notification queue.

No, because if the db query is gonna take 1.5 seconds, what exactly is there to do while waiting for the data the user requested? Have the user play tetris? If you click on a topic here in Discourse, what exactly are we going to show you while you are waiting for the actual topic data to arrive? We could show you generic placeholders, and a spinner…

Now if the user asked for 10 different things, you could gather all 10 in parallel and then merge them together visually in real time as the data arrives, “here’s the info on the blue widgets you requested”, “now adding the red widget info”, “now adding the green widget info” etc.

Sort of like when searching for flights on Expedia, it’ll show some data as it comes in, then rez in more as it arrives.
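A minimal Java sketch of that "gather in parallel, merge as it arrives" pattern, for what it's worth. Everything here is hypothetical: the `WidgetGatherer` class, the `fetch` stand-in with its fake latency, and the callback that plays the role of "rez it into the page". It uses `ExecutorCompletionService` from the standard library, which hands back results in completion order.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.concurrent.ExecutorCompletionService;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.function.Consumer;

class WidgetGatherer {

    // Hypothetical stand-in for a slow query; the sleep fakes latency.
    static String fetch(String color) throws InterruptedException {
        Thread.sleep(20L * color.length());
        return "info on the " + color + " widgets";
    }

    // Fires every request at once, then hands each result to onArrival
    // in completion order (not request order).
    static List<String> gather(List<String> colors, Consumer<String> onArrival) {
        ExecutorService pool = Executors.newFixedThreadPool(Math.max(1, colors.size()));
        ExecutorCompletionService<String> done = new ExecutorCompletionService<>(pool);
        List<String> merged = new ArrayList<>();
        try {
            for (String color : colors) {
                done.submit(() -> fetch(color));
            }
            for (int i = 0; i < colors.size(); i++) {
                // take() blocks until the next request finishes, whichever it is.
                String result = done.take().get();
                merged.add(result);
                onArrival.accept(result);
            }
        } catch (Exception e) {
            throw new RuntimeException(e);
        } finally {
            pool.shutdown();
        }
        return merged;
    }

    public static void main(String[] args) {
        gather(Arrays.asList("blue", "red", "green"),
                r -> System.out.println("now adding: " + r));
    }
}
```

Note this only pays off because the requests are independent. With a single 1.5 second query there is nothing to overlap it with.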

If I wasn’t on my phone and about to go to sleep I’d link Wumpus to page 3 of the android developer documentation which is entirely about not doing anything expensive on the UI thread and how extremely important that is and how it’s literally the architectural framework of every android app ever. (Loopers, handlers, asynctask etc). If you don’t you’ll get ANR and android will eat your task.

Well yes, but that’s just so the spinner can smoothly animate while you retrieve the data. Has nothing to do with the substance of the question.

So say you have 2 cores, and you’re browsing QT3, while downloading donkey porn. Is that better than having 1 core?

Don’t know what to tell you, man.

You literally have to do it in any Java application when you are doing heavyweight operations off of the event dispatch thread, otherwise the interface locks up.

Certainly in the behavior modeling work I do, the amount of threading is more than normal developers would encounter, but in the IDE application development work I’ve done, even that uses threading a lot.

Again, not talking about the kind of stuff you seem to be alluding to, which is breaking down huge tasks into a ton of parallel pieces to leverage parallel processing. Just talking about normal application development where you have various independent tasks that don’t rely on each other, and thus can be easily separated off into threads. One is hard, the other is routine.

Yeah, that’s not really kosher, at least in Java. That’s why the Sun docs specifically talk about not doing large operations on the EDT, and why they have various helper classes specifically designed to merge the work of different threads into the EDT stuff. Anyone who worked with Swing, for instance, basically had to understand this stuff.

Just as a super simple example, you have a menu item that does some big operation like saving a large file. If you do that on the EDT, directly on the stack from the menu listener, then the entire UI is going to freeze until that operation is done. That sucks. Even in cases where you don’t want the user to do other stuff, you still don’t usually just want to use the EDT for that, because it gives the impression that the application is broken, since it doesn’t respond to anything at all.

And in those kind of tasks, they are independent from the other stuff the user is doing. It’s easy to put them into a separate thread. There’s no reason to make the user wait, since it’s not going to impact the next thing they do.
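Roughly what that looks like, as a sketch that runs without a display: a single-threaded executor named `fake-edt` stands in for the real event dispatch thread (in actual Swing code you would reach for `SwingWorker` or `SwingUtilities.invokeLater` instead). The `SaveOffEdt` class, `saveLargeFile`, and `onSaveClicked` are made-up names for illustration.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.ThreadFactory;

class SaveOffEdt {

    // Daemon threads so the JVM can exit without an explicit shutdown.
    static ThreadFactory named(String name) {
        return r -> {
            Thread t = new Thread(r, name);
            t.setDaemon(true);
            return t;
        };
    }

    // Stand-in for the event dispatch thread: one thread, tasks run in order.
    static final ExecutorService ui = Executors.newSingleThreadExecutor(named("fake-edt"));
    static final ExecutorService background = Executors.newCachedThreadPool(named("worker"));

    // Hypothetical slow operation; the sleep fakes the disk work.
    static String saveLargeFile(String name) {
        try {
            Thread.sleep(200);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return "saved " + name + " on " + Thread.currentThread().getName();
    }

    // What the menu listener would do: start the save and return immediately.
    // The status update hops back onto the (fake) UI thread when the save ends.
    static CompletableFuture<String> onSaveClicked(String name) {
        return CompletableFuture
                .supplyAsync(() -> saveLargeFile(name), background)
                .thenApplyAsync(
                        msg -> msg + ", status shown on " + Thread.currentThread().getName(),
                        ui);
    }

    public static void main(String[] args) {
        CompletableFuture<String> save = onSaveClicked("big.dat");
        // The fake EDT is free to handle other events while the save runs.
        ui.submit(() -> System.out.println(
                "still responsive on " + Thread.currentThread().getName()));
        System.out.println(save.join());
    }
}
```

The save itself takes just as long as it would on the UI thread. What changes is that the interface keeps answering clicks in the meantime.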

Now, with the complex simulation environments I work with, the threading can potentially be more complex, but only the absolute oldest ones are single threaded. All the modern systems use multiple threads, because it lets us use the hardware more effectively. And because there are generally different areas of the application which can be largely severed from each other. The synchronization pieces are not trivial to make work, and not all threads are running at maximum load all the time, but multithreaded programming is a pretty standard thing.

That’s fine, but these are meaningless in terms of actual performance. All you get in return is “smoothly spinning hourglass” – zero performance improvement. And most notably, you’d achieve the same thing even with a single CPU. What we’re talking about here is actual wall clock time performance improvements based on breaking the work into segments and doing it on multiple CPUs simultaneously.

It’s kind of like the arguments you get into with people who insist they need a quad-core CPU so they can listen to WinAmp while they browse web pages.

Late to the discussion. But in this case we are talking apples vs oranges when it comes to multi threaded programming.

Enterprise multithreading is relatively simple because there is no UI involved. Events do not have to wait for human interaction or initiation. Functions are batched and take advantage of massive parallel programming. It happens all the time. And languages like Java (as pointed out by @Timex) simplified thread programming so much as to make it almost trivial.

BUT in UI programming, multi-threading is NOT simple. The human user experience is built on a causal paradigm. I click this, I see that action. I do this, I expect that. Short of some use cases involving data preparation, UI programming in a single threaded model is orders of magnitude easier than in a multithreaded one. It’s exactly as @wumpus had highlighted: what do you do when a user clicks something expecting a cause-effect to take place?

I read somewhere a long time ago, when GalCiv was an OS/2 game, that they multithreaded the AI and UI separately. And it was an innovation at the time. But a lot of effort went into planning and executing that. When playing Civ6, when I click on Next Turn and have to wait for the AI thread, twiddling my thumbs, I am amused that we have still not figured out how to do proper multithreaded games.

Game and Interaction heavy multithread programming is HARD.

Yeah, honestly, I don’t know of any UI toolkit which is actually multithreaded. I suppose there may be some?

Yea, I was trying to think of some. But came up empty. I think all the game engines on the market have a single game loop threading model.

Article from 2004 on the issues of making a multithreaded GUI toolkit which is interesting.

https://community.oracle.com/blogs/kgh/2004/10/19/multithreaded-toolkits-failed-dream

Also, unrelated, I hate oracle’s website, and I miss Sun.

Exact same sentiments.

Do you just discount any UI application development at all? I don’t even know where you could find a single threaded UI application on windows. Calculator has 32 threads. This stupid git UI thing in the screenshot below has 5 and it isn’t even doing anything other than sitting there and looking ugly. The dumbest little test tool we might have at work has multiple. Let alone any actual real server side code. Hell our quick analytics python stuff is all threaded. I can’t even tell if you’re serious that 2017 UI apps are not multi-threaded?

In a way that meaningfully impacts performance, not just letting the GUI stay updated with a responsive spinner / hourglass – which seems to be your interpretation.

To do that, the work has to be broken into independent sections, and each thread processes a section of the work, thus finishing faster as every CPU can bear a segment of the overall task at the same time, in parallel.

Stated another way, no, you don’t need two CPUs to listen to WinAmp and browse the web at the same time.
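To put rough numbers on the "finishing faster" part: the usual back-of-envelope model is Amdahl's law (brought in here as an aid, nobody above quoted it). If a fraction $p$ of the work splits perfectly across $n$ CPUs and the rest stays serial, the best-case speedup is

$$S(n) = \frac{1}{(1 - p) + \frac{p}{n}}$$

Taking $p = 0.9$ purely as an assumed figure, that gives $S(4) \approx 3.08$ and $S(32) \approx 7.80$, and no number of CPUs gets past $1/(1 - p) = 10$. A task that only keeps a spinner animating has $p$ close to zero in this sense, so its wall clock time barely moves.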