There are several pathways by which the FDA can release drugs to the public. The usual approval route requires years of testing to demonstrate an actual benefit to patients. You may also be familiar with “Emergency Use Authorization”, in which lower standards are used during emergencies like COVID. Waiting years for formal approval of a COVID vaccine would have failed to meet the population's urgent clinical needs. When drugs are released under a lower standard, the FDA makes much stricter demands regarding post-release surveillance and has a lower threshold for recalling the drug.

However, in the 1990s it became clear that for some clinical problems, neither a temporary measure (Emergency Use) nor a standard demonstration of clinical benefit was appropriate. The problem in question was HIV. As most people know, HIV can take a decade or more to cause any clinical problems at all. That means that under the normal approval process, a new drug could require a decade of testing before it could even hope to show a clinical benefit, especially if the drug was meant to act at the earliest possible stage, when success is most likely.

It would be grossly inappropriate for the FDA to require every potential HIV therapy to be tested for 10+ years, given the lack of any other treatment for HIV. So they created a new category: Accelerated Approval. Unlike standard approval, Accelerated Approval could be granted to drugs that didn’t show a clinical benefit, provided they showed an improvement in “surrogate” markers. In the case of HIV, this meant CD4 counts, which steadily decline in patients with HIV until they finally develop AIDS. If a drug could prevent CD4 counts from falling today, then maybe it could prevent AIDS ten years later. As with Emergency Use, this approval process comes with stricter conditions regarding post-release surveillance. And so we finally turned the tide in the battle against HIV.

Well, Alzheimer’s today has some similarities to HIV in the 90s. First and most important, all current drugs are pretty ineffective. Next, patients have a gradual increase in certain biochemical markers, namely amyloid and tau. In addition, patients have few or no symptoms until amyloid has been building up for decades. By the time patients finally report mild memory loss, amyloid has basically peaked, and it’s quite likely that too much damage has already been done to alter the eventual course. That’s why current Alzheimer’s drugs are terrible: they are trying to patch up an amyloid-ravaged brain rather than preventing the damage to begin with.

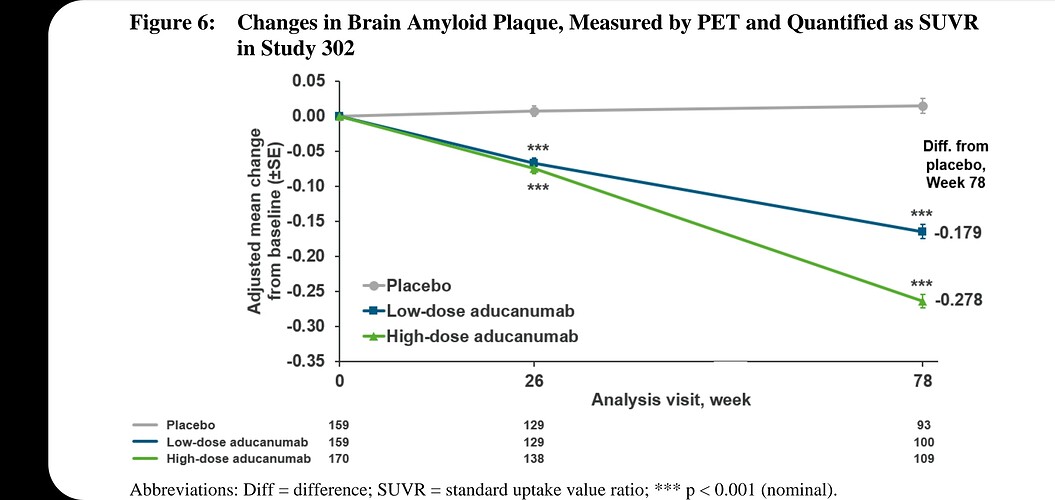

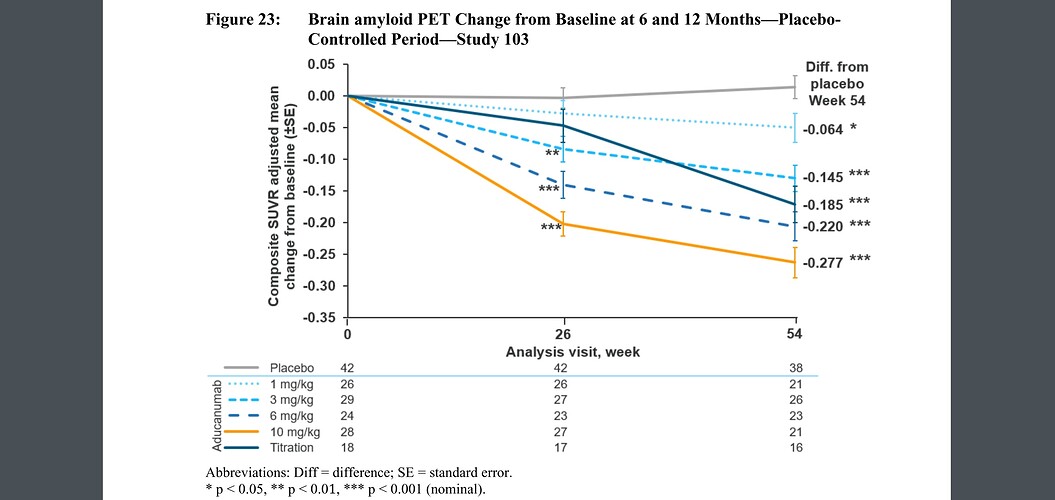

As with HIV, new drugs aimed at preventing Alzheimer’s should probably target surrogate markers (amyloid for Alzheimer’s, CD4 counts for HIV) rather than trying to fix the end-stage clinical problems (memory loss and opportunistic infections, respectively). And this particular drug apparently is effective at clearing amyloid (see page 49 of the pdf you linked). But under the normal approval process, it was required to demonstrate an improvement in memory. Since it is meant to act at an early stage and the study didn’t last a decade, it hasn’t yet shown much effect.

Under the Accelerated Approval pathway, it only has to show that it reduces amyloid. And the FDA agreed that under those conditions, it could be approved.